You don't know your TTV, your time to value. I'm willing to bet on it.

Not because you're not tracking things. You probably have a dashboard full of signups, API calls, session lengths, and something labeled "activation rate" that nobody has ever cleanly defined. You're tracking plenty. You're just not tracking the right thing.

Here's why that matters: developers who don't reach value fast leave fast. And you won't know they were ever close until they're already gone.

The Problem With Activity Metrics

Most API founders measure activity and call it progress. Sound familiar?

You end up tracking things like time to first API call, number of calls in the first session, or completion of an onboarding checklist. These metrics tell you a developer showed up and touched your product. They don't tell you whether that developer got what they came for.

Here's where it breaks down. The developer who made 3 API calls and churned looks identical in your data to the developer who made 3 API calls and became a power user, until you look at whether they hit your Value Moment.

You're measuring motion, not progress. That's the real problem.

What Is a Value Moment?

Your Value Moment is the specific interaction where a developer gets undeniable proof that your API does what they need it to do.

Not "they called the endpoint." Not "they saw a response." The moment they got a result that made them think: okay, this actually works for my use case.

It looks different for every product:

- For a payments API, it's the first successful test transaction in their dev environment

- For a speech-to-text API, it's the first transcript that returns with over 95% accuracy on their test audio

- For an AI completions API, it's the first response that matches their expected output format

The Value Moment is not generic. It's specific to what your product actually does. And it's almost always one concrete event, not a sequence.

Your TTV is the gap between signup and that moment. That's it.

The Value Moment Method: Define It, Track It, Fix It

You can define your Value Moment and start measuring TTV in an afternoon. Here's exactly how.

Step 1: Define Your Value Moment

Pull up data on your best-retained developers, the ones who've been active 60+ days. What did they do in the first 24 hours that churned developers almost never did?

You're looking for one identifiable event. If you can't access the data yet, interview three to five developers who successfully integrated your API. Ask them: "What was the moment you knew this was going to work?" Their answers will cluster around one or two specific interactions.

That cluster is your Value Moment. Write it down in plain language: "A developer successfully [specific action] with our API." That sentence is the foundation of everything else.
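If you do have the data, the comparison can be a few lines of code. Here's a rough sketch, not a prescribed method: it assumes each developer record has a signup timestamp and a list of (event name, timestamp) pairs, and that you've already split developers into retained and churned. The field names, event names, and sample data are all made up for illustration.

```python
from collections import Counter
from datetime import datetime, timedelta

def first_day_event_rates(developers, window=timedelta(hours=24)):
    """Share of developers who fired each event within `window` of signup."""
    counts = Counter()
    for dev in developers:
        cutoff = dev["signup"] + window
        # Count each event once per developer, no matter how often it fired.
        seen = {name for name, ts in dev["events"] if dev["signup"] <= ts <= cutoff}
        counts.update(seen)
    total = len(developers)
    return {name: n / total for name, n in counts.items()} if total else {}

def candidate_value_moments(retained, churned):
    """Rank events by how much more common they are among retained developers."""
    retained_rates = first_day_event_rates(retained)
    churned_rates = first_day_event_rates(churned)
    gaps = {
        name: rate - churned_rates.get(name, 0.0)
        for name, rate in retained_rates.items()
    }
    return sorted(gaps.items(), key=lambda item: item[1], reverse=True)

# Hypothetical sample data: two retained developers, two churned.
t0 = datetime(2024, 1, 1, 9, 0)
retained = [
    {"signup": t0, "events": [("api.call", t0 + timedelta(minutes=5)),
                              ("transaction.success", t0 + timedelta(minutes=20))]},
    {"signup": t0, "events": [("api.call", t0 + timedelta(minutes=3)),
                              ("transaction.success", t0 + timedelta(hours=2))]},
]
churned = [
    {"signup": t0, "events": [("api.call", t0 + timedelta(minutes=4))]},
    {"signup": t0, "events": [("api.call", t0 + timedelta(minutes=9)),
                              ("transaction.success", t0 + timedelta(days=3))]},
]

ranked = candidate_value_moments(retained, churned)
```

The event at the top of `ranked` is the one retained developers did in their first 24 hours and churned developers mostly didn't. Treat it as a candidate to confirm in interviews, not a verdict.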

Step 2: Identify the Signal

Your Value Moment needs to map to something your system actually logs: an event, a response code, a specific API call.

For most APIs this is straightforward. If your Value Moment is "first successful test transaction," your signal is probably transaction.success with a test-mode flag. If it's "first accurate transcript returned," your signal might be a response with a confidence score above your threshold.

If your system doesn't log the signal you need, that's a one-line tracking addition, not an analytics overhaul. Add the event, tag it as your Value Moment signal, move on.
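To make that concrete, here's a minimal sketch of the payments example, assuming your events are logged as dictionaries with a name, a timestamp, and a test-mode flag. The field names (`name`, `test_mode`, `timestamp`) and the `transaction.failed` event are assumptions for illustration; swap in whatever your system actually records.

```python
from datetime import datetime

# Assumed event name, borrowed from the payments example above.
VALUE_MOMENT_EVENT = "transaction.success"

def is_value_moment(event):
    """True when a logged event counts as the Value Moment signal."""
    return event.get("name") == VALUE_MOMENT_EVENT and event.get("test_mode") is True

def first_value_moment(events):
    """Timestamp of the earliest matching event, or None if there is none."""
    hits = [e["timestamp"] for e in events if is_value_moment(e)]
    return min(hits) if hits else None

# Hypothetical event log for one developer.
events = [
    {"name": "api.call", "timestamp": datetime(2024, 1, 1, 9, 5)},
    {"name": "transaction.failed", "test_mode": True, "timestamp": datetime(2024, 1, 1, 9, 12)},
    {"name": "transaction.success", "test_mode": True, "timestamp": datetime(2024, 1, 1, 9, 20)},
    {"name": "transaction.success", "test_mode": True, "timestamp": datetime(2024, 1, 1, 9, 45)},
]

signal_at = first_value_moment(events)
```

Only the first matching event matters. Later successes are usage, not the Value Moment.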

Step 3: Measure the Gap

TTV = time from signup to first Value Moment signal.

That's the whole formula. You're not averaging session lengths or weighting engagement scores. You're measuring one gap: how long does it take a developer to get their first undeniable proof your API works?

Track it for every new developer who signs up. Over time you'll have a distribution. What's the median? What's the 75th percentile? And the number that really matters: what percentage of developers hit the Value Moment at all within their first 7 days?

That last number explains your retention curve better than anything else in your dashboard.
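Here's one way those three numbers could be computed, as a sketch under a few assumptions: each developer record carries a `signup_at` timestamp and a `value_moment_at` timestamp that is `None` if they never got there. Both field names are hypothetical, and the sample data is invented.

```python
from datetime import datetime, timedelta
from statistics import median, quantiles

def ttv_summary(developers, window=timedelta(days=7)):
    """Median and 75th-percentile TTV in minutes, plus the share who reached
    the Value Moment within `window` of signup."""
    ttvs = [
        d["value_moment_at"] - d["signup_at"]
        for d in developers
        if d["value_moment_at"] is not None
    ]
    minutes = sorted(t.total_seconds() / 60 for t in ttvs)
    reached_in_window = sum(1 for t in ttvs if t <= window)
    return {
        "median_minutes": median(minutes) if minutes else None,
        # quantiles() needs at least two data points on older Python versions.
        "p75_minutes": quantiles(minutes, n=4, method="inclusive")[2]
        if len(minutes) >= 2 else None,
        # Share of ALL signups, including developers who never got there.
        "reached_within_window": reached_in_window / len(developers)
        if developers else 0.0,
    }

# Hypothetical sample: four developers reach the Value Moment, one never does.
signup = datetime(2024, 1, 1, 9, 0)
developers = [
    {"signup_at": signup, "value_moment_at": signup + timedelta(minutes=10)},
    {"signup_at": signup, "value_moment_at": signup + timedelta(minutes=20)},
    {"signup_at": signup, "value_moment_at": signup + timedelta(minutes=30)},
    {"signup_at": signup, "value_moment_at": signup + timedelta(minutes=40)},
    {"signup_at": signup, "value_moment_at": None},
]

summary = ttv_summary(developers)
```

Note one design choice: the median and 75th percentile only cover developers who reached the Value Moment, so they can look healthy while most signups never get there. That's why the within-7-days share is calculated against every signup.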

What to Do With Your TTV Number

Your TTV number tells you two things immediately.

First, whether your onboarding is actually working. If the median TTV for developers who stay long-term is 18 minutes and your current median is 4 hours, that gap is your onboarding problem. Not your product. Your onboarding.

Second, where your intervention window is. Developers who don't hit the Value Moment in their first session almost never come back. That's not a judgment call. It's a pattern. Your job is to get them there before that window closes.

From here you optimize by working backward from the Value Moment. What's the friction between signup and that first signal? Where do developers drop off before they get there? Those are the specific things to fix. Not "improve the docs" or "add more examples" in the abstract, but the precise steps between where developers start and where they need to get.
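Working backward can be as simple as a funnel count. The sketch below assumes you can list, for each developer, which onboarding steps they completed. The step names (`api_key_created`, `first_api_call`) are hypothetical placeholders for whatever sits between signup and your Value Moment, and the sample numbers are invented.

```python
# Hypothetical onboarding steps, in order, ending at the Value Moment.
FUNNEL = ["signup", "api_key_created", "first_api_call", "value_moment"]

def funnel_dropoff(developer_steps, funnel=FUNNEL):
    """Return (from_step, to_step, drop_rate) for each consecutive pair of steps.

    developer_steps is a list of sets, one set of completed step names per developer.
    """
    reached = []
    for i in range(len(funnel)):
        needed = set(funnel[: i + 1])
        # A developer counts for a step only if they also completed every earlier step.
        reached.append(sum(1 for steps in developer_steps if needed <= steps))
    report = []
    for i in range(1, len(funnel)):
        prev, cur = reached[i - 1], reached[i]
        drop = (prev - cur) / prev if prev else 0.0
        report.append((funnel[i - 1], funnel[i], drop))
    return report

# Hypothetical sample: 10 signups, 8 create a key, 6 make a call, 3 reach the Value Moment.
developer_steps = (
    [{"signup"}] * 2
    + [{"signup", "api_key_created"}] * 2
    + [{"signup", "api_key_created", "first_api_call"}] * 3
    + [set(FUNNEL)] * 3
)

report = funnel_dropoff(developer_steps)
worst = max(report, key=lambda row: row[2])
```

In this made-up data, `worst` points at the step between the first API call and the Value Moment. In your own data, whichever transition it points at is the one to fix first.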

See Your TTV Before It Shows Up in Your Churn Report

Here's the bottom line: defining your Value Moment takes an afternoon. Watching TTV data accumulate manually, correlating it against retention, turning it into something you can actually act on? That takes a lot longer.

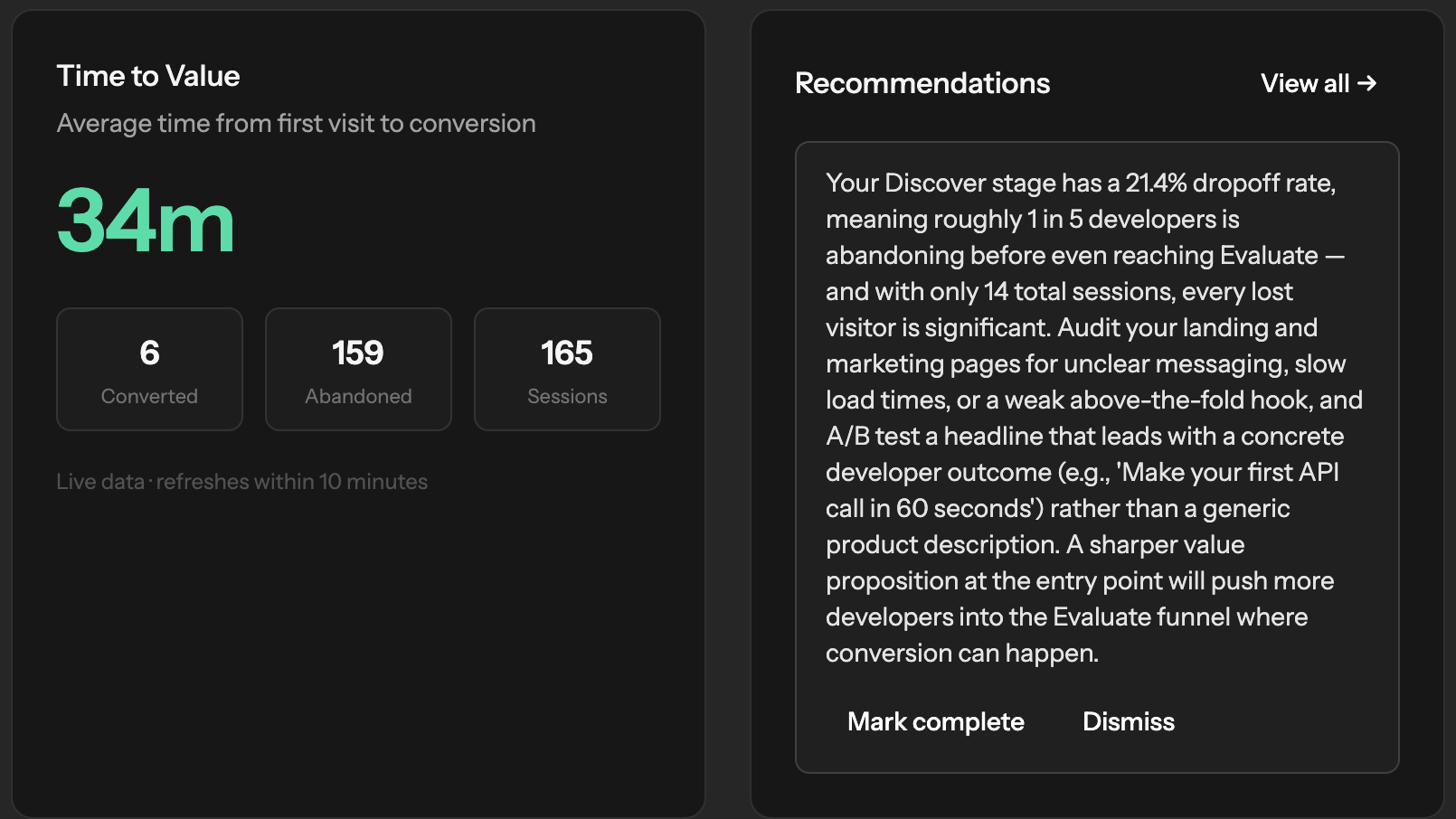

That's what Built for Devs handles for you. Sign up, define your Value Moment, and as new developers flow through your onboarding, you'll see your TTV in your dashboard automatically. No custom analytics pipeline required.

You don't need a data team to start. You need to define the right moment, then let the tool do the tracking.

Start measuring your developer TTV →